- AI manages to score a “C” average across four subjects, failing only one paper.

- Feedback on human and AI papers looks remarkably similar.

- AI wrote shallow, less descriptive papers, compared to its human counterparts.

A world where computers think like humans is no longer limited to science fiction movies. The world has been in a race for artificial intelligence (AI) for over a decade now. Tech companies like Facebook, Amazon, Microsoft, Google, and Apple all have a stake in the game, but they’re also competing against entire countries. France, Israel, and the United Kingdom are on equal footing with the United States in their AI strategic strength, with China, Canada, Germany, Japan, and South Korea closely following.

Long-term winners aside, the AI world was shaken by the latest technological development known as GPT-3. OpenAI, a research business co-founded by Elon Musk, developed the revolutionary AI which can create content with a human language structure better than any of its predecessors.

We hired a panel of professors to create a writing prompt, gave it to a group of recent grads and undergraduate-level writers, and fed it to GPT-3. We then had the panel grade the anonymous submissions and complete a follow-up survey about the writers. AI may not be at world-dominance level yet, but can the latest artificial intelligence get straight A’s in college? Keep reading to find out.

C’s Get Degrees

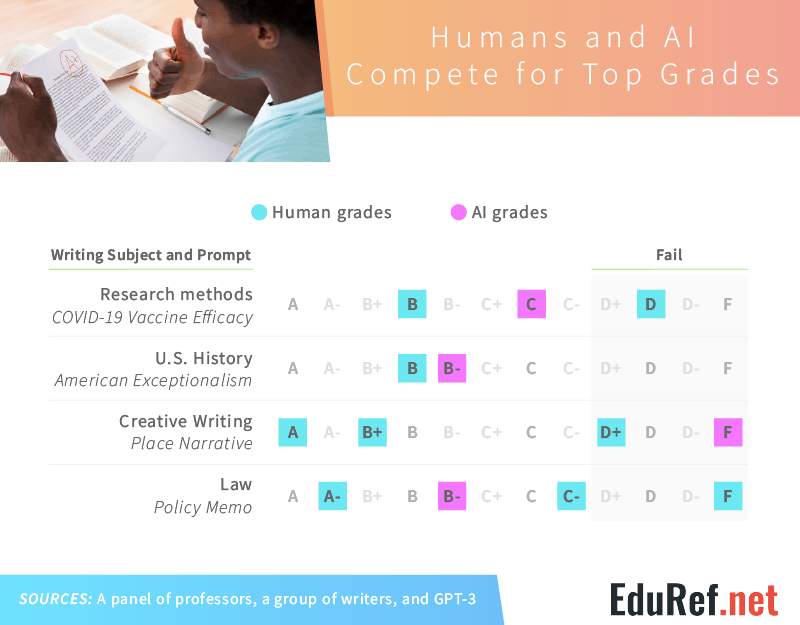

As the saying goes, “C’s get degrees.” Straight A’s are far from common in college, and although AI is far from perfect, GPT-3 performed in line with our freelance writers. While human writers earned a B and D on their research methods paper on COVID-19 vaccine efficacy, GPT-3 earned a solid C. Performing a bit better in U.S. History, humans received a B and C+ on their American exceptionalism paper, while GPT-3 landed directly in the middle with a B-. Even when it came to writing a policy memo for a law class, GPT-3 passed the assignment with a B-, with only one of three students earning a higher grade.

However, GPT-3’s writing skills were mostly technical. When tested with a place narrative prompt for a creative writing course, GPT-3 failed. In comparison, one freelance writer earned an A, while the other two earned a B+ and D+. Despite GPT-3’s failure in the eyes of a creative writing professor, natural language generation (NLG) software is being used to write a variety of content, including a novel that nearly won an award – The Day a Computer Writes a Novel. Previous success points to future possibilities, so GPT-3 may just need some adjustments before it can be called a creative writer. Nevertheless, when it came to passing classes, the AI succeeded in three of the four.
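
The “C” average can be checked with a little arithmetic. The sketch below is only an illustration: the article does not say how the average was calculated, so the 4.0-scale grade points and the choice of C- to C+ as the “C range” are assumptions, and the function names are hypothetical.

```python
# Assumed 4.0-scale grade points (a common U.S. convention, not taken from the article).
GRADE_POINTS = {
    "A": 4.0, "A-": 3.7,
    "B+": 3.3, "B": 3.0, "B-": 2.7,
    "C+": 2.3, "C": 2.0, "C-": 1.7,
    "D+": 1.3, "D": 1.0, "D-": 0.7,
    "F": 0.0,
}

# GPT-3's grades as reported in this section.
gpt3_grades = {
    "Research Methods": "C",
    "U.S. History": "B-",
    "Law": "B-",
    "Creative Writing": "F",
}


def grade_point_average(grades):
    """Return the unweighted mean of the grade points for a subject -> letter mapping."""
    points = [GRADE_POINTS[letter] for letter in grades.values()]
    return sum(points) / len(points)


def in_c_range(gpa):
    """True if the average falls between C- and C+ (an assumed definition of a 'C' average)."""
    return GRADE_POINTS["C-"] <= gpa <= GRADE_POINTS["C+"]


gpa = grade_point_average(gpt3_grades)
print(f"GPT-3 grade point average: {gpa:.2f}")
print(f"Within the C range: {in_c_range(gpa)}")
```

Under these assumptions the average comes out at roughly 1.85, which sits between a C- and a C.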

Our Panel of Professionals

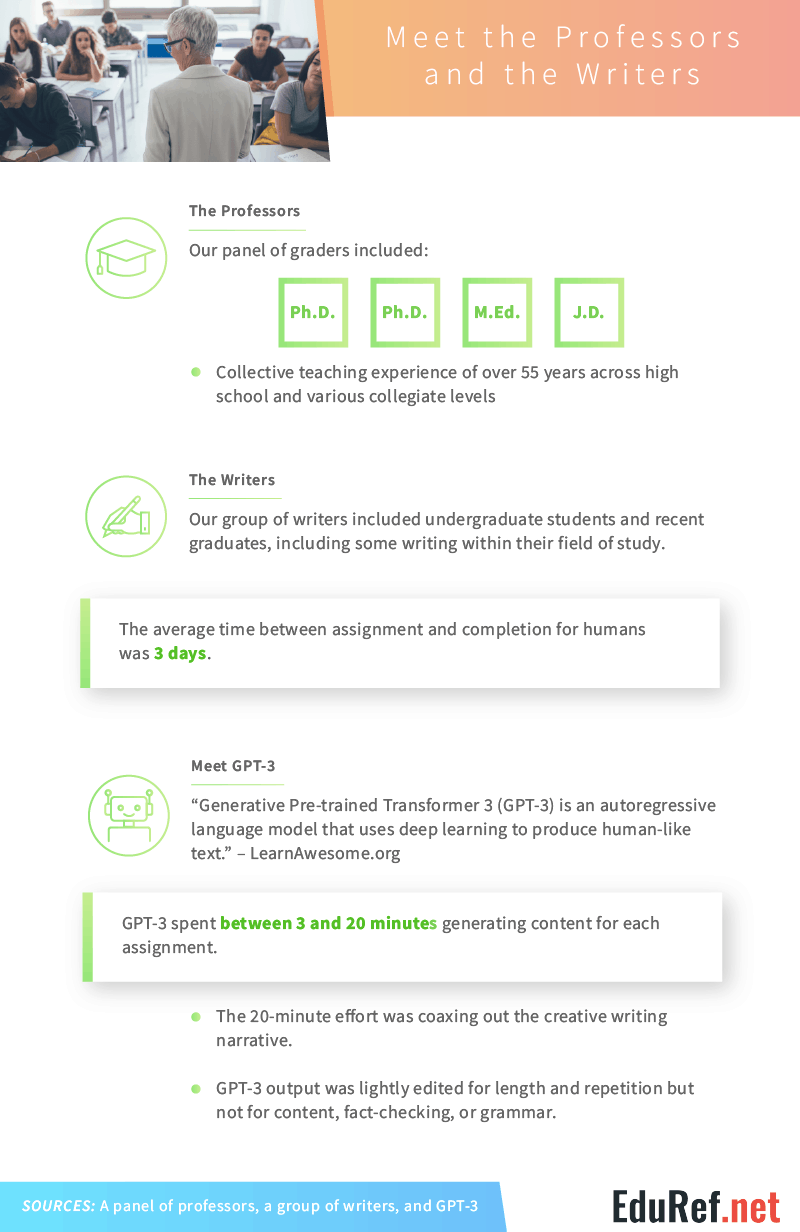

While every professor grades differently, our panel of graders included two Ph.D.s, one M.Ed., and one J.D. Collectively, they had over 55 years of teaching experience across high school and various collegiate levels. Our writers included undergraduate students and recent graduates, some of whom wrote for prompts within their field of study. On average, they completed assignments in three days.

Three days may seem like a short period of time for completing college papers, but GPT-3 accomplished the task in under 20 minutes. Using deep learning to produce human-like text, GPT-3 spent between three and 20 minutes generating content for each assignment, taking the longest to write the creative writing narrative. To limit human interference, GPT-3’s output was only lightly edited for length and repetition. Its content, factual information, and grammar were left untouched.

Human-Like Content

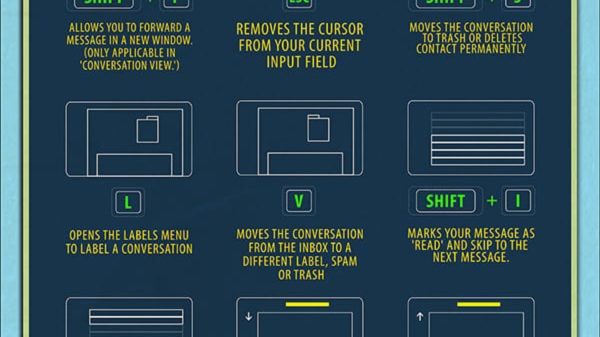

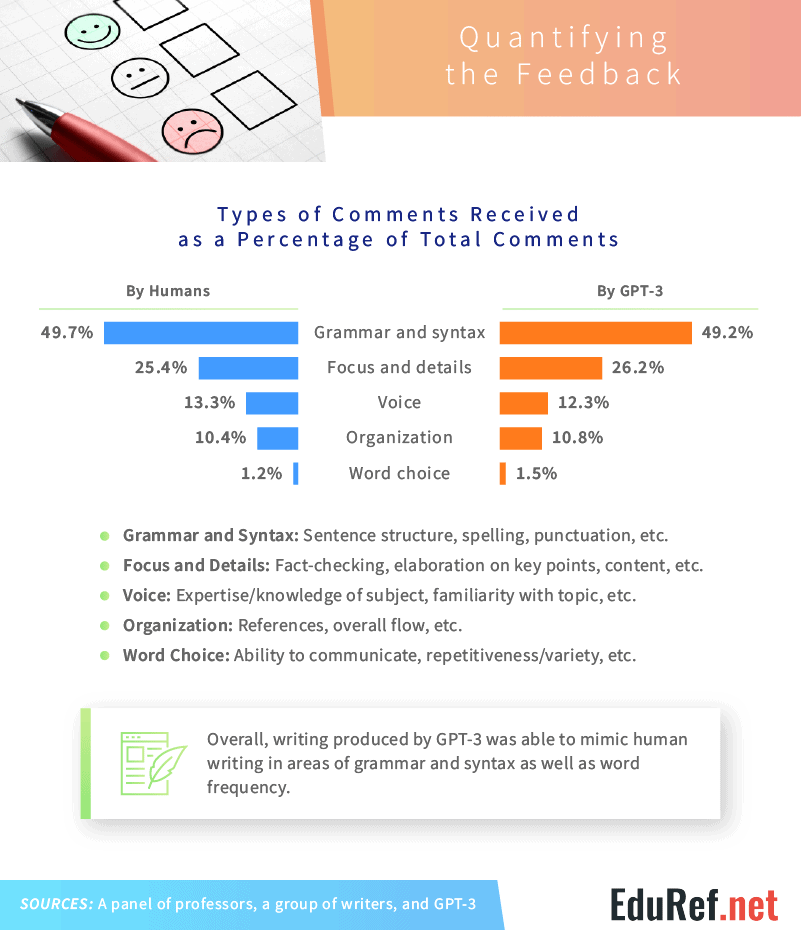

Even without being augmented by human interference, GPT-3’s assignments received more or less the same feedback as the human writers. While 49.2% of comments on GPT-3’s work were related to grammar and syntax, 26.2% were about focus and details. Voice and organization were also mentioned, but only 12.3% and 10.8% of the time, respectively. Similarly, our human writers received comments in nearly identical proportions. Almost 50% of comments on the human papers were related to grammar and syntax, with 25.4% related to focus and details. Just over 13% of comments were about the humans’ use of voice, while 10.4% were related to organization.

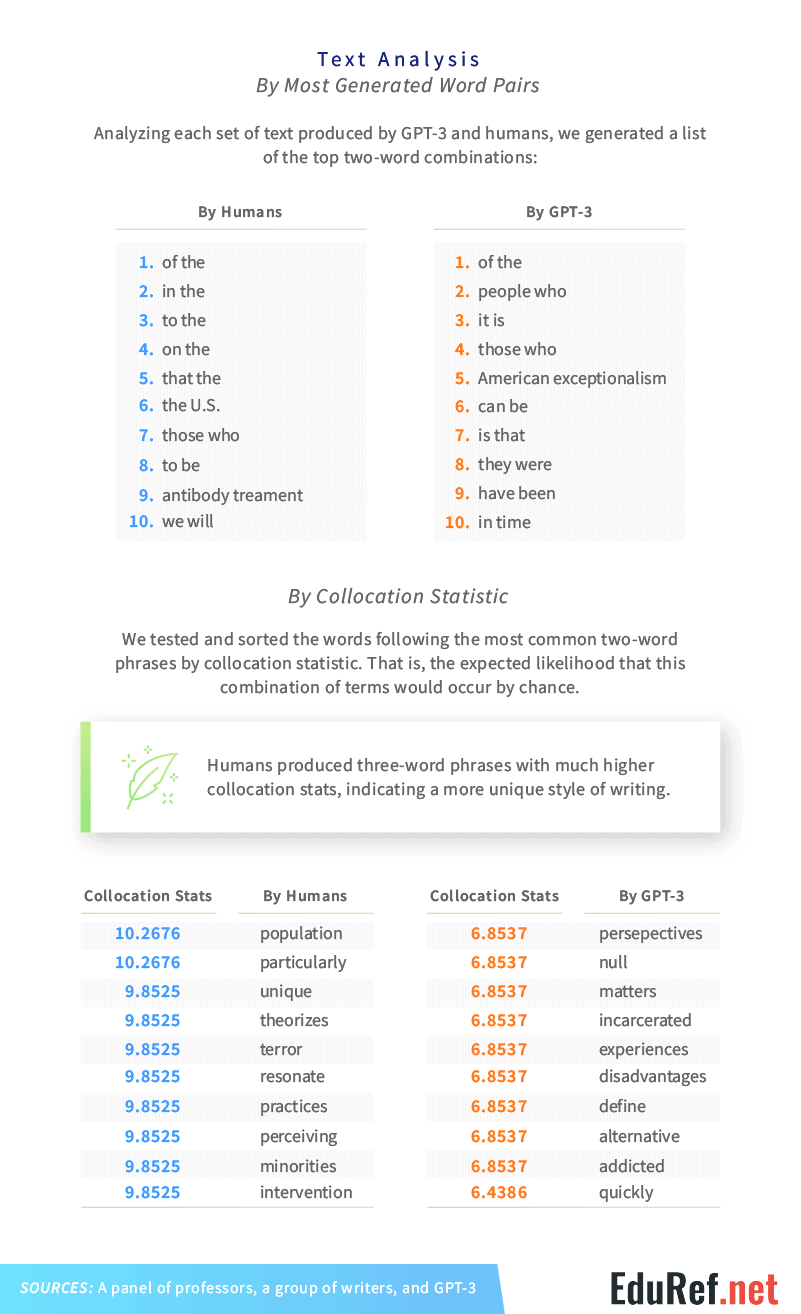

Despite receiving the same kinds of comments, GPT-3’s content wasn’t that similar to the human participants’ content. Looking at the top two-word combinations, GPT-3 and the human writers only shared the top combination: “of the.” “People who,” “it is,” and “those who” were also commonly used by GPT-3, whereas the human writers used “in the,” “to the,” and “on the” the most. While many of these words also happen to be among the most used in the English language in general, the difference in combinations points to some differences in how AI and humans structure their writing.
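
Counting two-word combinations like the ones described above can be sketched in a few lines. This is a minimal illustration, not the analysis pipeline used for this project: the tokenizer, the function names, and the sample text are all assumptions made for the example.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into words (letters and straight apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def top_bigrams(text, n=4):
    """Return the n most frequent adjacent word pairs and their counts.

    Pairs are counted across sentence boundaries, which keeps the sketch simple.
    """
    tokens = tokenize(text)
    return Counter(zip(tokens, tokens[1:])).most_common(n)


# Hypothetical sample text, not taken from any submission.
sample = (
    "The efficacy of the vaccine depends on the size of the trial. "
    "People who enrolled early were part of the first group."
)

for (first, second), count in top_bigrams(sample):
    print(f"{first} {second}: {count}")
```

Running the same function on two sets of papers and comparing the resulting lists gives the kind of side-by-side view discussed here.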

Analyzing the two-word combinations further by testing the subsequent third words, we found that the humans produced three-word phrases with much higher collocation statistics, which measure how much more often a combination of words occurs than would be expected by chance. Even considering GPT-3’s high-scoring papers, the difference in collocation stats indicates that our human writers produced noticeably more distinctive content than the AI.
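
The article does not name the collocation statistic that was used. As one possible stand-in, the sketch below scores each three-word phrase with pointwise mutual information (PMI) between a two-word combination and the word that follows it. The choice of PMI, the function names, and the toy text are assumptions for illustration only.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into words."""
    return re.findall(r"[a-z']+", text.lower())


def trigram_pmi(text):
    """Score each three-word phrase by PMI (in bits) between its first two words and its third.

    PMI = log2( P(w1 w2 w3) / (P(w1 w2) * P(w3)) ).
    Higher values mean the phrase occurs more often than chance would predict.
    """
    tokens = tokenize(text)
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    trigrams = Counter(zip(tokens, tokens[1:], tokens[2:]))
    n_uni = sum(unigrams.values())
    n_bi = sum(bigrams.values())
    n_tri = sum(trigrams.values())

    scores = {}
    for (w1, w2, w3), count in trigrams.items():
        p_tri = count / n_tri
        p_bi = bigrams[(w1, w2)] / n_bi
        p_w3 = unigrams[w3] / n_uni
        scores[(w1, w2, w3)] = math.log2(p_tri / (p_bi * p_w3))
    return scores


# Toy text (hypothetical): "of the" is followed by several different words,
# while "new york" is always followed by "city".
toy = "of the people of the trial of the vaccine new york city"
scores = trigram_pmi(toy)
for phrase in [("new", "york", "city"), ("of", "the", "people")]:
    print(" ".join(phrase), round(scores[phrase], 2))
```

In the toy text, the fixed phrase scores higher than the loose one because its two-word opening is rarer. On samples as small as the ones in this project, scores like these are noisy and should be read as exploratory.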

AI Misses the Mark

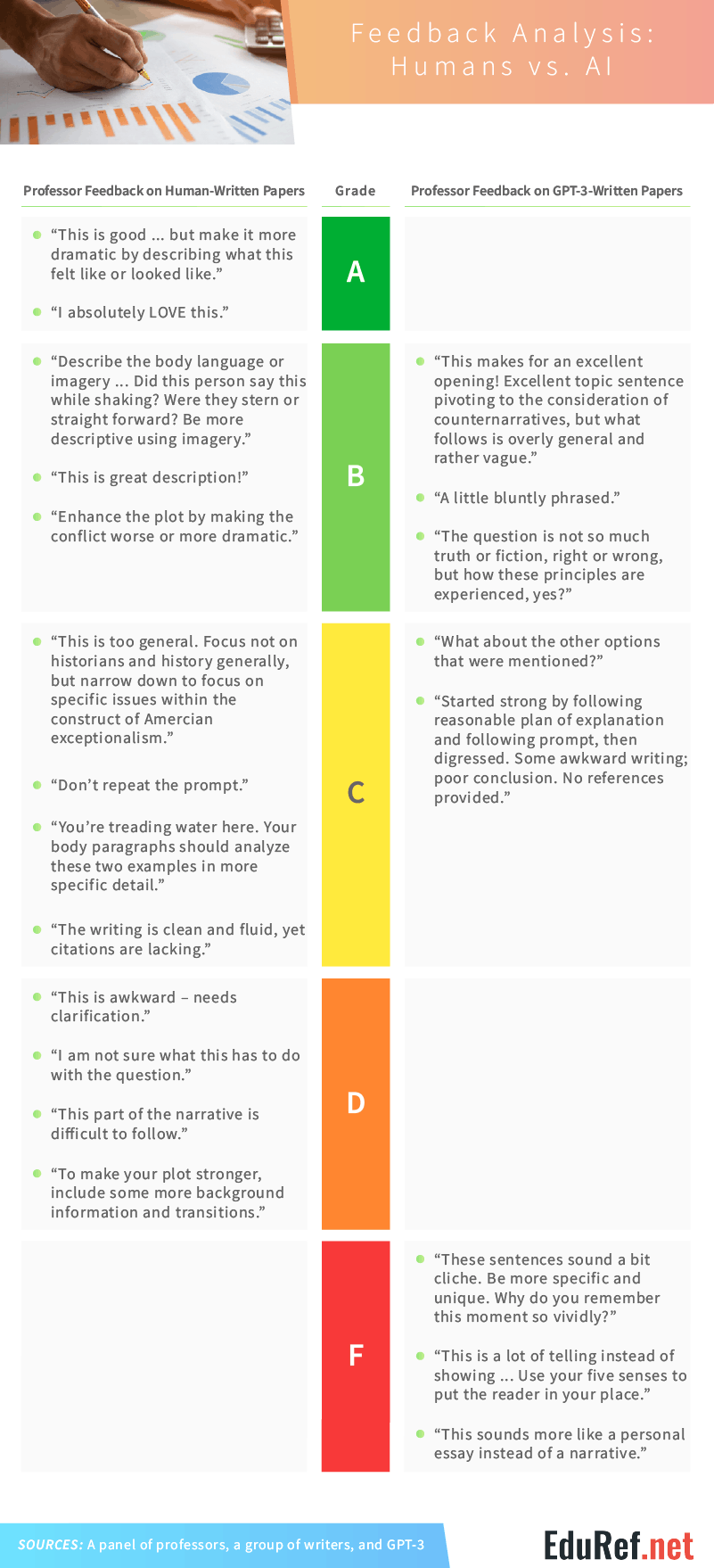

Our panel of professors graded along various rubric styles and doled out feedback in similar proportions for humans and AI. The humans received enthusiastic feedback for their A-rated assignments, with professors mainly looking for more of what had already been written. One comment called for more dramatics, while another wanted more descriptive content to give readers a fuller picture. Even for content earning lower scores, professors looked for the human writers to dive deeper, explain more, and add to a good foundation.

For GPT-3, the feedback was more diverse. While GPT-3 was praised for some excellent openings and transitions, it was criticized for being vague, too blunt, and awkward. GPT-3 also slipped up with its citations, at one point not providing references at all. But the awkward writing, lack of citations, and bluntness didn’t cause GPT-3 to fail – its inability to craft a strong narrative did. GPT-3’s F-rated assignment received comments calling the writing clichéd, too personal, and bland. The AI failed to craft a strong narrative incorporating the five senses, and telling-not-showing essays don’t cut it in creative writing classes.

Judging the “Writer”

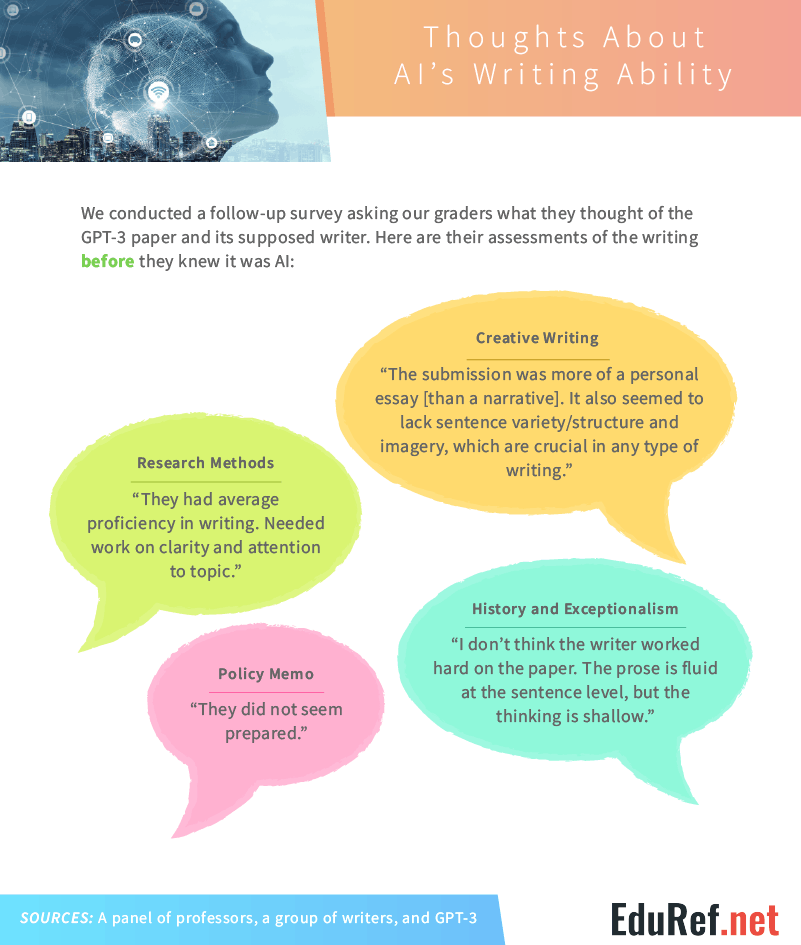

Rubrics aside, professors can tell a lot about a student based on their writing. We asked our graders what they thought of GPT-3’s paper and the “writer” behind it without letting them in on our AI secret. Despite earning a passing grade in research methods, U.S. History, and Law, the professors didn’t have great things to say about GPT-3. The grader for research methods noted that the “student” had average writing proficiency and needed to work on clarity and attention to the topic. While the professor grading the history assignment said GPT-3’s prose was fluid at the sentence level, they also noted that the “writer” failed to think critically.

Failing the creative writing assignment, GPT-3 received tougher feedback from that professor compared to the others. Noting that the content was more of an essay than a narrative, the creative writing grader also pointed out GPT-3’s lack of sentence variety, structure, and imagery – all of which are crucial to creative writing success.

Evolution of Education

Artificial intelligence has come a long way – and still has a long way to go. From virtual assistants, analytics, and security to health care, self-driving cars, and autonomous flying, artificial intelligence is already used across various industries every day. OpenAI’s GPT-3 is the new kid on the block in the technological world, creating human-like content light-years ahead of any software that came before it.

Despite its revolutionary output, GPT-3 won’t be earning college degrees on its own anytime soon. When put up against human writers, GPT-3 secured some passing grades but failed to nail creative writing. While its success in numerous topics is promising for the future of AI, it may be a bit problematic for college professors. If AI continues to create human-like content, educators will have to think twice about the writers behind assignments and how writing prompts are developed to best capture human imagination and critical thinking skills.

But for now, educators can focus on teaching living, breathing humans and grading the content they produce. At EduRef.net, our goal is to help educators and students access all the information they need to be successful. From lesson plans to in-depth guides of universities and college courses, we’re a one-stop shop for everything education. Easily browse our collection of resources and tools by visiting us online today.

Methodology and Limitations

For this project, we hired professors to create a writing prompt, grade submissions, and complete a follow-up survey to get as many comments and notes as possible about their grading process and their thoughts about our writers. The submissions came from a dozen freelance writers, either current undergraduate students or self-described undergraduate-level writers, some of whom were writing in their field of study.

GPT-3 was prompted to produce a paper in each subject, with minimal work beyond entering the professor-supplied prompt. GPT-3 output was lightly edited for length and repetition, but not for content, fact-checking, or grammar. Our analysis was limited to four subject areas, with three to four written submissions each. Analyzed text for GPT-3 included roughly 2,600 words and for humans, roughly 5,500 words. The findings in this article are limited by these small sample sizes and are for exploratory purposes only, and future research should approach this topic in a more rigorous way.

Fair Use Statement

Artificial intelligence has transcended the science fiction world and landed in reality. With new technological advancements happening every day, it can be hard to keep up. Feel free to share this project with friends or readers who may be interested – as long as it’s for noncommercial purposes. All we ask is that you include a link back to this page so our contributors receive proper credit.
